The entry into force of the European Union’s Artificial Intelligence (AI) Act marks a significant milestone in technology legislation. In force since 1 August 2024, the law regulates artificial intelligence across the EU in response to growing concerns about the ethical, social, and safety implications of AI systems. As researchers including Holger Hermanns of Saarland University and Anne Lauber-Rönsberg of Dresden University of Technology examine the ramifications of this legislation, programmers face a practical question: how will the law affect their day-to-day work?

The overarching goal of the AI Act is to protect users from potentially discriminatory or harmful AI applications, particularly in sensitive sectors such as healthcare, employment, and the legal sector. This recognition that AI can pose risks reflects a maturing understanding of technology’s role in society. Unlike conventional regulatory frameworks, which focus more on product safety and consumer rights, the AI Act emphasizes ethical implications and the operational integrity of intelligent systems.

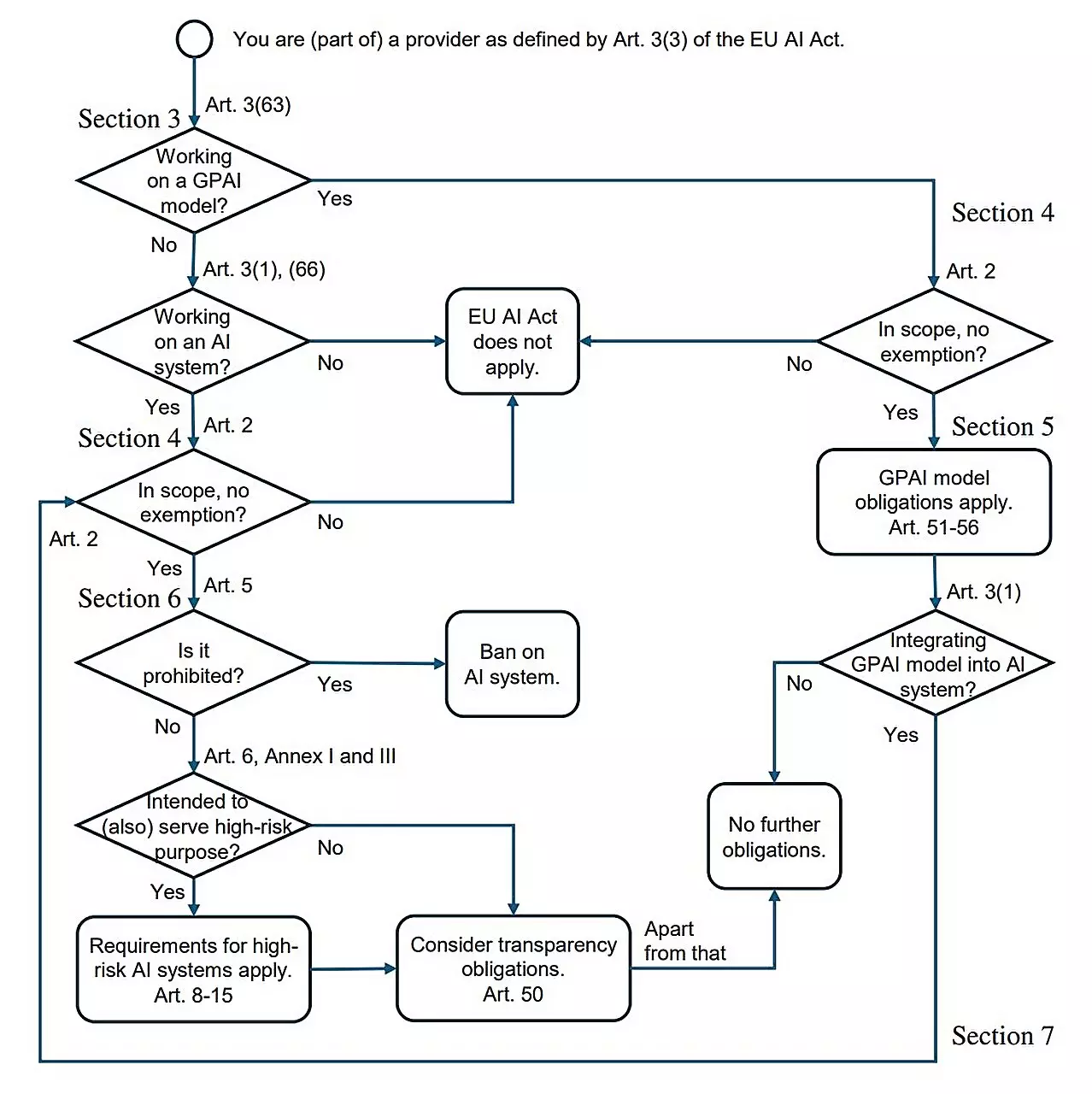

The legislation categorizes AI systems by their associated risk level. It distinguishes high-risk applications, such as those that guide hiring decisions or determine financial creditworthiness, from low-risk systems, such as those used in entertainment or non-critical online environments. This classification allows the rules to scale with the potential impact of an AI system rather than imposing a blanket approach.

For software developers, the primary concern is understanding the specific requirements the new law imposes, especially for high-risk systems. Hermanns’ research highlights a crucial point: although most programmers may not see immediate changes in their daily tasks, those who develop high-risk AI tools must comply with a stringent set of rules. For instance, a developer who builds software intended to screen job applications must ensure that the system is fair and transparent and that it avoids biases that could result from flawed data sets.

Most programmers are unfamiliar with legislation and reluctant to read lengthy legal texts, which raises a practical question: how can they efficiently learn what they need to know about compliance? To address this, Hermanns and his colleagues have authored a concise research paper titled “AI Act for the Working Programmer,” which distills the essential information into accessible guidance.

The definition of a high-risk AI system is crucial to understanding compliance. As outlined by Sarah Sterz, a co-author of the paper, specific requirements apply to systems designed to operate in critical sectors. For example, developers must validate the quality and neutrality of training data to avoid inadvertently perpetuating discrimination in automated decision-making. Documentation is equally important: under the new provisions there must be a clear record of how data was handled and processed.
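As a rough illustration of what checking training data and keeping a record of it could look like in practice, the following Python sketch compares how often each group receives a positive label and stores the result alongside a hash of the data. It is only a sketch under stated assumptions: the function names, the field names `group` and `hired`, and the `max_gap` review threshold are hypothetical choices made for this example, and none of them are prescribed by the AI Act or taken from the paper.

```python
import hashlib
import json
from collections import Counter
from datetime import datetime, timezone


def positive_rate_by_group(rows, group_key, label_key):
    """Return the share of positive labels (label == 1) for each group."""
    totals = Counter()
    positives = Counter()
    for row in rows:
        group = row[group_key]
        totals[group] += 1
        if row[label_key] == 1:
            positives[group] += 1
    return {group: positives[group] / totals[group] for group in totals}


def build_data_record(rows, group_key, label_key, max_gap=0.2):
    """Summarise a training set in a record that can be archived.

    max_gap is a hypothetical review threshold, not a legal limit.
    """
    if not rows:
        raise ValueError("cannot document an empty data set")
    rates = positive_rate_by_group(rows, group_key, label_key)
    gap = max(rates.values()) - min(rates.values())
    # Hash a canonical serialisation so a later audit can confirm that
    # the documented data is the data that was actually used.
    canonical = json.dumps(rows, sort_keys=True).encode("utf-8")
    return {
        "created_at": datetime.now(timezone.utc).isoformat(),
        "num_rows": len(rows),
        "sha256": hashlib.sha256(canonical).hexdigest(),
        "positive_rate_by_group": rates,
        "rate_gap": gap,
        "needs_review": gap > max_gap,
    }


if __name__ == "__main__":
    # Invented toy data, for illustration only.
    sample = [
        {"group": "A", "hired": 1},
        {"group": "A", "hired": 1},
        {"group": "A", "hired": 0},
        {"group": "B", "hired": 1},
        {"group": "B", "hired": 0},
        {"group": "B", "hired": 0},
    ]
    print(json.dumps(build_data_record(sample, "group", "hired"), indent=2))
```

A gap in label rates does not prove discrimination, and a small gap does not rule it out. The point of the sketch is narrower: the check and its result are written down in a form that can be archived next to the data set.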

Additionally, developers of high-risk systems must provide comprehensive user manuals and keep logs from which the system’s operations can be traced. This flight-recorder or ‘black box’ principle mirrors practices in other high-stakes industries, such as aviation, where accountability and traceability are non-negotiable.
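One way a developer might approach such traceable logs is to write one structured entry per automated decision. The Python sketch below does this with the standard `logging` and `json` modules. The helper names `make_audit_logger` and `log_decision`, the field names, and the example model version are assumptions made for illustration; the AI Act does not prescribe this format.

```python
import io
import json
import logging
import uuid
from datetime import datetime, timezone


def make_audit_logger(stream):
    """Create a logger that writes one JSON object per line to stream."""
    # A unique logger name keeps handlers from piling up across calls.
    logger = logging.getLogger(f"audit.{uuid.uuid4().hex}")
    logger.setLevel(logging.INFO)
    logger.propagate = False
    handler = logging.StreamHandler(stream)
    handler.setFormatter(logging.Formatter("%(message)s"))
    logger.addHandler(handler)
    return logger


def log_decision(logger, model_version, inputs, output):
    """Record a single automated decision and return its event id."""
    event_id = uuid.uuid4().hex
    logger.info(json.dumps({
        "event_id": event_id,
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "model_version": model_version,
        "inputs": inputs,
        "output": output,
    }, sort_keys=True))
    return event_id


if __name__ == "__main__":
    # An in-memory buffer stands in for a real log file here.
    buffer = io.StringIO()
    audit = make_audit_logger(buffer)
    log_decision(audit, "screening-0.1", {"years_experience": 4}, "shortlist")
    print(buffer.getvalue(), end="")
```

Recording the model version with every entry is a design choice in this sketch: it lets a later reviewer tie a given decision to the exact system that produced it.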

While the AI Act imposes constraints to protect users, it keeps the barriers to innovation low elsewhere. Experimental research and development remain permitted, so the public and private sectors can explore advances without regulatory hindrance, at least until their systems reach the market.

Some experts express optimism that the legislation sets a proactive benchmark for other parts of the world. By pioneering a comprehensive legal structure governing AI, the EU positions itself as a leader in elevating ethical considerations and public safety to the forefront of technological advancements. Hermanns himself believes that this initiative will not stifle Europe’s competitiveness in the global market; instead, it may propel it into a more structured and responsible development phase.

The introduction of the EU AI Act is undoubtedly a remarkable shift in how artificial intelligence will be developed, implemented, and monitored within Europe. While programmers focusing on high-risk systems will need to adapt and adhere to new guidelines, many who engage with low-risk technologies may proceed without substantial disruption.

As the field evolves, dialogue between legislators and technologists is imperative. Only through collaboration can we ensure that innovation flourishes while civil liberties and safety remain protected. The EU AI Act has the potential to serve as a pivotal reference point, guiding the ethical evolution of AI in a manner that benefits society at large.